Index Bloat: Definition, Causes, and Impact on SEO Performance

Index bloat occurs when a website has too many low-value or irrelevant URLs indexed in search results. The problem is not the quantity of indexed pages—it’s their quality. When search engines index pages that offer little or no value to users, those pages consume crawl budget and dilute site authority without contributing to organic performance.

Common Sources of Index Bloat

Index bloat typically develops from specific technical and content issues:

- Tag pages that compete with category pages for the same keywords

- Faceted navigation that creates duplicate URLs through filter combinations

- Session IDs that generate unique URLs for each user visit

- Printer-friendly versions and stripped-down duplicates of existing content

- Internal search result pages, thank-you pages, and confirmation pages

- Expired product listings and out-of-stock items

- User-generated content that creates thin pages

- Auto-generated URLs from unlimited publishing systems

The problem develops gradually, making it easy to overlook until it affects performance.
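Several of the sources above leave recognizable traces in the URL itself, so a rough first pass can be automated. The sketch below is one way to flag them in Python; the parameter names, path prefixes, and reason labels are hypothetical and would need to be adapted to the site's real URL scheme.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical parameter and path names; adjust to the site's own URL scheme.
SESSION_PARAMS = {"sid", "sessionid", "phpsessid"}
FACET_PARAMS = {"color", "size", "sort", "price"}
SEARCH_PATHS = ("/search",)
PRINT_MARKERS = ("/print/",)


def bloat_reasons(url: str) -> list[str]:
    """Return the bloat patterns a URL matches (empty list if none)."""
    parts = urlsplit(url)
    path = parts.path.lower()
    params = {name.lower() for name in parse_qs(parts.query, keep_blank_values=True)}

    reasons = []
    if params & SESSION_PARAMS:
        reasons.append("session-id")
    if params & FACET_PARAMS:
        reasons.append("faceted-navigation")
    if path.startswith(SEARCH_PATHS):
        reasons.append("internal-search")
    # Appending "/" lets a trailing "/print" segment match the marker too.
    if any(marker in path + "/" for marker in PRINT_MARKERS) or "print" in params:
        reasons.append("printer-friendly")
    if path.startswith("/tag/"):
        reasons.append("tag-page")
    return reasons
```

Running this over a crawl export or a log sample gives a quick count per pattern, which helps decide where to look first. It cannot judge content quality, so thin user-generated pages and expired listings still need a separate review.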

How Index Bloat Damages SEO Performance

Index bloat weakens site performance through several interconnected mechanisms:

Crawl budget dilution: Googlebot spends resources on non-priority URLs instead of crawling important pages. This matters most on large websites, where crawl budget is limited. When a significant share of that budget goes to low-value pages, critical content may not be recrawled and refreshed in the index as often as needed.

Keyword cannibalization: Important pages compete with weaker ones for the same search intent, confusing search engines about which page to rank. Neither page ranks well, or worse, a low-quality page outranks an authoritative one.

Quality signal degradation: Thin and duplicate content signals weaken overall site quality. Because Google’s helpful content system applies sitewide, low-quality indexed pages can negatively impact the entire site’s quality signal. Pages lacking original, useful, or in-depth content dilute the overall authority of the domain.

Authority dilution: Internal link equity spreads thin across useless URLs, meaning important pages receive less PageRank strength. This reduces crawl priority, lowers rankings for strong pages, and decreases visibility in competitive search results and featured snippets.

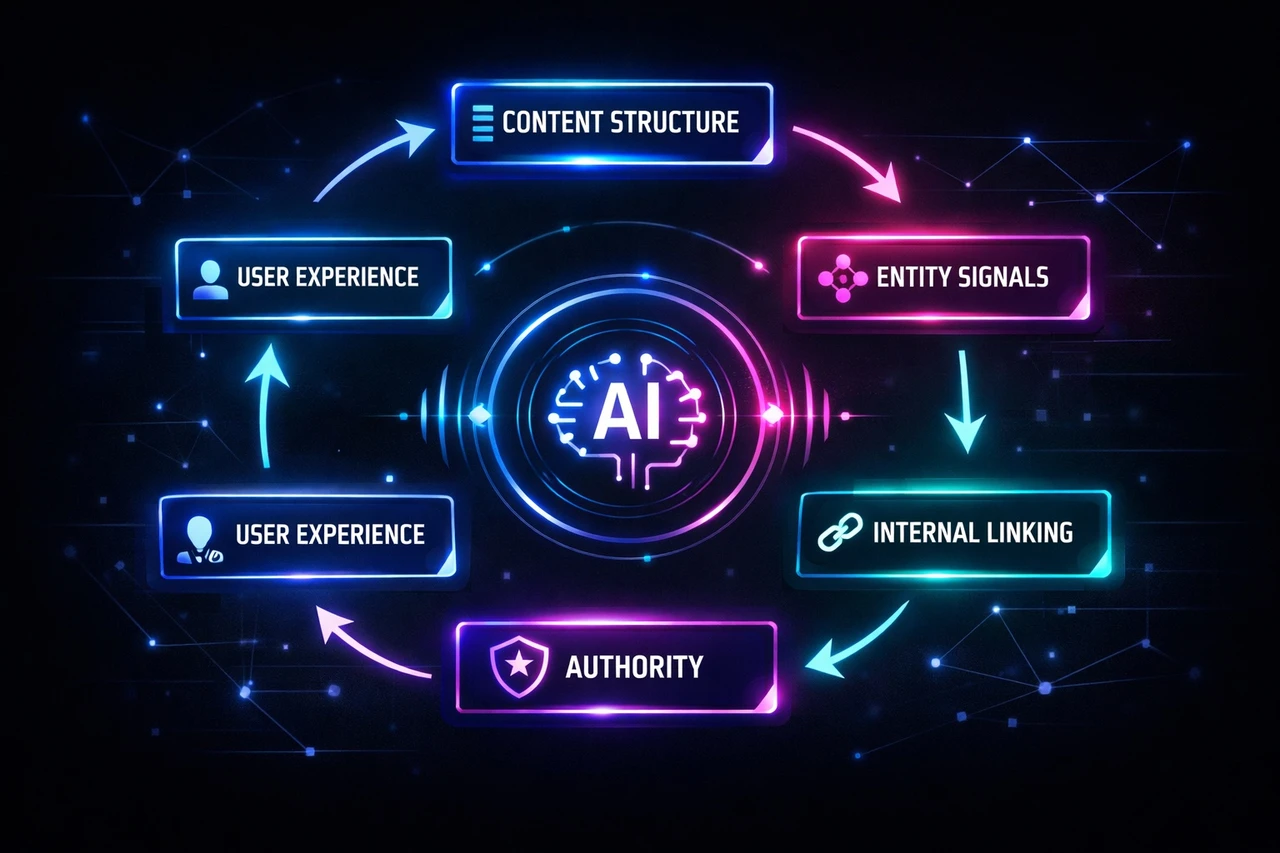

Indexation Control Framework: Crawling vs. Indexing

Managing indexation on large websites requires understanding how crawling and indexing controls interact. These are distinct mechanisms that work together:

Crawling controls determine which pages search engines can access:

- Robots.txt disallows: Block crawlers from accessing specific URLs or directories. More specific rules override general ones.

- Nofollow attributes: Function as hints rather than directives. Page-level nofollows suggest crawlers should not follow any links on that page; link-level nofollows apply to individual URLs.

- JavaScript: Can prevent crawling if it avoids using the <a> element with an href attribute, effectively hiding links from crawlers.
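To check how a set of disallow rules treats specific URLs before deploying them, Python's standard-library robots.txt parser can be used as a quick test harness. The rules and URLs below are hypothetical. Note that this parser applies the first matching rule in file order, which can differ from Google's most-specific-rule behavior, so the sample deliberately avoids overlapping rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the sample rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://example.com/search?q=boots",
        "https://example.com/cart/checkout",
        "https://example.com/guides/crawl-budget",
    ):
        print(url, is_crawlable(url))
```

A result of True only means the URL can be crawled. Whether it ends up indexed depends on the indexing controls described next.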

Indexing controls determine which pages appear in search results:

- Noindex tags: Implemented via robots meta tags or HTTP headers, these signal search engines to exclude a page from the index. The page must remain crawlable for the noindex directive to be recognized.

- Canonical tags: Identify the primary version of duplicate or similar pages, consolidating indexing signals without affecting crawl behavior.

- Password protection: Prevents both crawling and indexing, useful for staging environments.

Avoiding Common Control Conflicts

Combining controls incorrectly creates technical debt and unintended consequences:

- Noindex + robots.txt disallows: Prevents crawlers from seeing the noindex tag, potentially leaving pages indexed indefinitely.

- Canonicals + noindex: Sends contradictory signals. The noindex may transfer to the canonical URL, removing both pages from the index.

- Noindex without crawlability: Pages must remain crawlable for noindex directives to be recognized. External links to blocked URLs can trigger indexation without the noindex ever being read.

Monitor crawl status regularly through Google Search Console to identify these conflicts before they impact rankings.
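The conflicts listed above are mechanical enough to check in a crawl export. As a minimal sketch, assuming each page has already been reduced to three signals (the `PageSignals` record and its field names are hypothetical), the rules could be encoded like this:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageSignals:
    """Hypothetical per-URL record built from a crawl export."""
    url: str
    blocked_by_robots: bool
    noindex: bool
    canonical: Optional[str] = None


def find_conflicts(page: PageSignals) -> list[str]:
    """Return a description of each conflicting control combination on the page."""
    conflicts = []
    if page.noindex and page.blocked_by_robots:
        conflicts.append("noindex is unreadable because robots.txt blocks the URL")
    if page.noindex and page.canonical and page.canonical != page.url:
        conflicts.append("noindex combined with a canonical pointing elsewhere")
    return conflicts
```

A self-referencing canonical on a noindexed page is not flagged here, since the contradiction described above arises when the canonical points to a different URL.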

Noindex: Strategic Exclusion for Improved Crawl Efficiency

When to Apply Noindex

The noindex meta tag prevents specific pages from appearing in search results while keeping them accessible to users. Apply it to pages that serve functional purposes but dilute SEO value:

- Admin panels and checkout flows

- Thank-you and cart pages

- Internal search result pages

- Duplicate content and parameter variations

These pages consume crawl budget without contributing to organic visibility.

Implementation

Add <meta name="robots" content="noindex"> to the HTML head of pages you want excluded. The noindex value alone keeps a page out of the index; use content="noindex, nofollow" only if you also want crawlers to ignore the links on that page. For WordPress sites, plugins like Yoast SEO allow you to toggle indexation status directly. Use tools like Screaming Frog to audit which pages currently carry noindex directives and verify implementation.
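For spot checks outside a crawler, the presence of a noindex directive can be verified from a page's HTML and response headers. The sketch below uses only the Python standard library. It assumes the HTML and headers have already been fetched, and it ignores user-agent-specific forms such as a `googlebot` meta tag or a prefixed `X-Robots-Tag` value.

```python
from html.parser import HTMLParser
from typing import Optional


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for token in attr.get("content", "").split(","):
                if token.strip():
                    self.directives.add(token.strip().lower())


def has_noindex(html: str, headers: Optional[dict[str, str]] = None) -> bool:
    """Return True if a robots meta tag or an X-Robots-Tag header carries noindex."""
    parser = _RobotsMetaParser()
    parser.feed(html)

    header_value = ""
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = {token.strip().lower() for token in header_value.split(",")}

    return "noindex" in parser.directives or "noindex" in header_directives
```

This reads the raw HTML only, so a directive injected by JavaScript after rendering would not be detected.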

Long-Term Behavior

Noindex operates through a robots meta tag or HTTP response header. Both methods require the page to remain crawlable—search engines must access the page to read the noindex directive. This is a critical distinction: noindexed pages still get crawled. They consume crawl budget but don’t contribute to your index.

Google may eventually treat long-term noindexed URLs as nofollow, degrading link equity flow. Monitor noindexed pages regularly to ensure they’re achieving intended exclusion without creating unintended crawl inefficiencies.

Canonicalization: Consolidating Duplicate Content

What Canonical Tags Do

A canonical URL is the preferred version of a web page you want to rank in search engines. The canonical tag—an HTML element placed in the <head> section—tells search engines which URL to prioritize when multiple versions of similar content exist:

<link rel="canonical" href="https://www.example.com/article" />

Canonicalization is a soft solution. It suggests which URL to index without redirecting users, unlike a 301 redirect that permanently moves both users and search engines to a new location. Search engines treat canonical tags as a hint, not a directive, meaning they can disregard your suggestion if conflicting signals exist.

Why Canonicalization Matters

Duplicate content is pervasive. Without proper canonicalization, search engines waste crawl budget on redundant URLs, dilute link equity across duplicates, and may index the wrong version of your content.

Canonical tags consolidate link equity by funneling value from external and internal links to the preferred URL. This directly impacts PageRank and rankings. For large websites, canonicalization optimizes crawl budget by guiding Googlebot to prioritize valuable pages over duplicates created by URL parameters, trailing slashes, protocol variations, or pagination.
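To see how many URL variants collapse into one piece of content, it can help to normalize a URL list and group the results. The following sketch assumes that protocol, trailing slashes, and a small set of tracking parameters never change the content; the parameter names are hypothetical, and those assumptions should be verified for the site in question before acting on the output.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize(url: str) -> str:
    """Map a URL variant to one normalized form (https, no trailing slash, no tracking)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    path = parts.path.rstrip("/") or "/"
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key.lower() not in TRACKING_PARAMS
    )
    return urlunsplit(("https", host, path, urlencode(kept), ""))


def group_duplicates(urls):
    """Return {normalized_url: [variants]} for every group with more than one variant."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}
```

Each group with more than one member is a candidate for a shared canonical URL, or for a redirect where the variants serve no purpose for users.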

Implementation Best Practices

- Use absolute URLs and include self-referencing canonical tags on every indexable page, even without duplicate content.

- Canonical URLs must be set in original HTML, not injected via JavaScript, to avoid rendering mismatches.

- Ensure only one canonical tag exists per page, placed in the <head> section.

- Reinforce canonical signals by using consistent internal linking, including only canonical URLs in sitemaps, and avoiding conflicting directives.

- Audit canonical implementation using Google Search Console’s URL Inspection Tool or Screaming Frog to identify missing or conflicting tags at scale.
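Some of these checks can be scripted against a page's raw HTML. The sketch below flags a missing canonical, multiple canonicals, a non-absolute href, and a tag placed outside the head. The function name and issue messages are illustrative and do not come from any particular tool.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class _CanonicalParser(HTMLParser):
    """Records every rel="canonical" link and whether it sits inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.canonicals = []  # list of (href, found_in_head)

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "link":
            attr = dict(attrs)
            rels = (attr.get("rel") or "").lower().split()
            if "canonical" in rels:
                self.canonicals.append((attr.get("href") or "", self.in_head))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def audit_canonical(html: str) -> list[str]:
    """Return a list of canonical-tag issues found in the raw HTML."""
    parser = _CanonicalParser()
    parser.feed(html)

    issues = []
    if not parser.canonicals:
        issues.append("missing canonical tag")
    if len(parser.canonicals) > 1:
        issues.append("multiple canonical tags")
    for href, in_head in parser.canonicals:
        parts = urlsplit(href)
        if not (parts.scheme in ("http", "https") and parts.netloc):
            issues.append(f"canonical is not an absolute URL: {href!r}")
        if not in_head:
            issues.append("canonical tag is outside <head>")
    return issues
```

Because the function parses the HTML as delivered by the server, a page that only receives its canonical through JavaScript will be reported as missing one, which matches the recommendation to set canonicals in the original HTML.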

Crawl Budget: Allocation, Measurement, and Optimization

Understanding Crawl Budget

Crawl budget represents the time and resources search engine bots dedicate to your site, determined by crawl capacity limit and crawl demand. For large sites with 1M+ unique pages or medium sites with 10k+ rapidly changing pages, this becomes critical. Google allocates crawl budget based on site authority, server capacity, and historical crawl demand—but it doesn’t scale proportionately with website size.

When bots waste resources on low-value pages, duplicate content, or technical dead-ends, important content faces delayed indexation and missed ranking opportunities.

Identifying and Measuring Crawl Waste

Start by analyzing Google Search Console crawl stats and server log files to identify URL patterns consuming disproportionate resources. Common sources of waste include:

- Faceted navigation parameters

- Infinite scroll pagination

- Low-value automated pages like tag archives

- HTTP/HTTPS variations

- Development environments accidentally left crawlable

Calculate a crawl efficiency ratio: the percentage of crawl budget spent on high-value versus low-value pages.
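As a rough illustration of that ratio, the sketch below takes the paths requested by a search engine bot (for example, extracted from server logs) and a rule for what counts as high-value. The `is_high_value` rule shown is a placeholder assumption; a real one would reflect the site's own page types.

```python
def crawl_efficiency(crawled_paths, is_high_value) -> float:
    """Percentage of crawl hits that landed on high-value URLs."""
    paths = list(crawled_paths)
    if not paths:
        return 0.0
    high_value_hits = sum(1 for path in paths if is_high_value(path))
    return 100.0 * high_value_hits / len(paths)


def is_high_value(path: str) -> bool:
    # Placeholder rule: parameterized URLs, tag archives, and internal
    # search pages are treated as low-value.
    return "?" not in path and not path.startswith(("/tag/", "/search"))


if __name__ == "__main__":
    sample = ["/guides/a", "/guides/b", "/tag/seo", "/shoes?color=red"]
    print(f"{crawl_efficiency(sample, is_high_value):.1f}% of hits were high-value")
```

Tracking this figure over time, rather than reading a single value, makes it easier to see whether technical changes are shifting crawl activity toward priority pages.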

Eliminating Waste Through Technical Controls

- Deploy strategic robots.txt rules to block non-indexable directories and parameters.

- Implement canonical tags for duplicate content and parameterized URLs.

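- Use noindex tags for low-value pages that serve navigational functions but shouldn’t appear in search results. Noindexed pages are still crawled, so where the goal is to save crawl budget, a robots.txt disallow is the more direct control.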

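- Handle URL parameters through robots.txt rules, canonical tags, and consistent internal linking. Google retired the URL Parameters tool in Search Console in 2022, so parameter handling can no longer be configured there.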

- Refine XML sitemaps to include only prioritized pages—keep them accurate, segmented for large sites, and exclude low-value URLs.

- Optimize internal linking architecture to direct crawl budget toward important pages while reducing links to low-value content.

Technical Optimization for Efficiency

- Minimize resource usage for page rendering by prioritizing critical rendering paths, optimizing images with WebP and compression, and serving minified assets via CDN.

- Avoid cache-busting parameters by using versioning in filenames.

- Keep site architecture flat—maximum two clicks from homepage—and leverage HTTP/2 for faster page loads.

- Return proper 404 or 410 status codes for permanently removed pages and eliminate soft 404 errors.

- For JavaScript-heavy sites, pre-render pages into HTML to reduce the crawl budget needed for rendering and processing.
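Soft 404s from the list above are pages that return a 200 status while telling the user the content is gone. A simple heuristic check might look like the sketch below. The phrase list is an assumption and will produce false positives and negatives, so the output is a starting point for manual review rather than a verdict.

```python
# Assumed phrases that suggest an error page; tune these to the site's templates.
NOT_FOUND_PHRASES = ("page not found", "no longer available", "0 results")


def classify_response(status: int, body_text: str) -> str:
    """Label a response as 'gone', 'soft-404', 'ok', or 'other'."""
    text = body_text.lower()
    if status in (404, 410):
        return "gone"
    if status == 200 and any(phrase in text for phrase in NOT_FOUND_PHRASES):
        return "soft-404"
    if status == 200:
        return "ok"
    return "other"
```

URLs labeled soft-404 are candidates for a proper 404 or 410 response, or for a redirect if an equivalent page exists.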

Case Study: Recovering from Indexation Collapse

A B2B industrial content site with over 300 well-structured articles experienced indexation suppression. Six to eight months after scaling content production, a large portion of pages dropped from the index. Remaining indexed pages showed near-zero impressions. There was no manual penalty or technical malfunction—just systematic suppression.

Root Cause

The diagnosis revealed a structural problem: every article followed a highly consistent framework. The content was accurate and well-organized, but that uniformity created a recognizable scaled-content pattern. Modern site-level quality classifiers are designed to identify exactly this kind of structural repetition, and they responded by reducing the site’s overall visibility weighting.

Content accuracy became an entry requirement, not a differentiator. Uniform patterns signal scaled production rather than unique expertise.

Recovery Framework

Recovery required three parallel actions:

- Structural content redesign: Rewriting high-priority articles to introduce distinct analytical frameworks and eliminate template patterns.

- Content consolidation: Pruning or merging lower-value pages to reduce structural similarity and concentrate authority.

- Authority signal reinforcement: Producing new content focused on insights that couldn’t be found elsewhere.

Early indicators included renewed crawl activity, stable indexation of revised content, and initial impression growth on updated URLs. The core lesson: site-level signals govern individual page visibility. More content using the same approach worsens the problem. Differentiation at scale, not volume, drives sustainable indexation control.

Implementing Indexation Control: Strategic Principles

Effective indexation control starts with operational clarity, not tool selection. Use robots.txt to manage crawler traffic, noindex for pages users need but search engines shouldn’t rank, and canonicals to consolidate duplicate variations.
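One way to make that division of labor explicit is a small decision helper that maps page properties to a single control. The sketch below is one possible reading of the principle, not a definitive policy; the `Control` names, the input flags, and the order of the checks are all assumptions.

```python
from enum import Enum


class Control(Enum):
    ROBOTS_DISALLOW = "robots.txt disallow"
    CANONICAL = "canonical to preferred URL"
    NOINDEX = "noindex"
    INDEX = "index"


def choose_control(
    crawl_trap: bool,
    is_duplicate: bool,
    needed_by_users: bool,
    should_rank: bool,
) -> Control:
    """Pick one primary control per URL pattern to avoid conflicting signals."""
    if crawl_trap:
        # Unbounded URL spaces are a crawler-traffic problem first.
        return Control.ROBOTS_DISALLOW
    if is_duplicate:
        return Control.CANONICAL
    if needed_by_users and not should_rank:
        return Control.NOINDEX
    return Control.INDEX
```

Returning exactly one control per pattern mirrors the earlier point that stacking directives on the same URL tends to create conflicts.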

Sitemaps and URL Discipline

Sitemaps should reflect editorial intent, not dump every URL. Exclude filtered states, tracking parameters, and thin utility pages. For large sites, faceted navigation requires governance: decide which filtered combinations deserve visibility and which should be canonicalized or disallowed.
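In practice this means filtering URLs before they reach the sitemap rather than exporting everything the CMS knows about. A minimal sketch with the Python standard library follows. The `is_indexable` rule, which treats any query string as a filtered or tracked state, is an assumption and is stricter than many sites will want.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def is_indexable(url: str) -> bool:
    # Assumed rule: any query string marks a filtered or tracked state.
    return urlsplit(url).query == ""


def build_sitemap(urls, include=is_indexable) -> str:
    """Return sitemap XML containing only the URLs accepted by `include`."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        if include(url):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        "https://www.example.com/guides/crawl-budget",
        "https://www.example.com/shoes?color=red",
    ]))
```

Passing the inclusion rule as a parameter keeps the editorial decision separate from the XML generation, so the rule can be reviewed and changed without touching the export code.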

Internal Linking and Content Architecture

Internal linking architecture signals relevance and priority; weak structures lead to slow discovery and diluted authority. Content pruning isn’t cleanup—it’s indexation governance that reduces structural waste and strengthens average quality.
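Click depth is one measurable proxy for how easily crawlers can discover a page through internal links. Assuming the internal link graph is available as a mapping from each page to the pages it links to (for example, from a crawler export), a breadth-first search gives the minimum number of clicks from the homepage. The paths used here are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum click depth from `home` for every reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


if __name__ == "__main__":
    graph = {
        "/": ["/guides", "/products"],
        "/guides": ["/guides/crawl-budget"],
        "/guides/crawl-budget": ["/guides/deep"],
    }
    for page, depth in click_depths(graph).items():
        print(depth, page)
```

Pages missing from the result are unreachable through internal links, which makes them orphans that depend on sitemaps or external links for discovery.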

Align your indexation strategy with business value. Ensure commercially important pages receive clear crawl paths, strong internal links, proper canonical signals, and sitemap inclusion. This operational clarity makes ranking decisions easier and helps search engines understand what actually matters on your site.

Maintaining Index Health: Systems and Monitoring

Build Preventive Systems

Effective indexation control requires proactive systems, not reactive fixes. Automation guardrails—such as noindexing page templates and managing sitemap generation at the CMS level—prevent low-value pages from entering the index in the first place. These structural controls ensure that search engines allocate crawl budget to strategically important pages rather than wasting resources on duplicate, thin, or parameterized content.

Regular Monitoring and Audits

Regular monitoring through the Pages report in Google Search Console identifies indexation issues before they compound. Quarterly index audits assess site performance, uncover content opportunities, and ensure alignment with business and marketing goals.
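A recurring audit can start with a simple comparison between the URLs the site intends to have indexed and the URLs reported as indexed. The sketch below assumes both lists are already available as plain URL strings, for instance from the sitemap and from an export of the Pages report. The result keys are illustrative.

```python
def index_audit(sitemap_urls, indexed_urls) -> dict[str, list[str]]:
    """Compare intended URLs (sitemap) with URLs reported as indexed."""
    sitemap, indexed = set(sitemap_urls), set(indexed_urls)
    return {
        # Intended for the index but not reported there.
        "not_indexed": sorted(sitemap - indexed),
        # Reported as indexed but never submitted: possible bloat.
        "unexpected": sorted(indexed - sitemap),
    }
```

A growing "unexpected" list between audits is an early sign that new low-value URL patterns are entering the index.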

Fixing Existing Index Bloat

Fixing existing index bloat requires a combination of technical interventions:

- Remove internal links to unwanted content.

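- Apply meta robots tags or X-Robots-Tag HTTP headers to prevent indexing, and update robots.txt to limit crawling of URLs that never need to be indexed. Avoid disallowing a URL that still relies on a noindex directive, since the directive cannot be read on a blocked page.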

- Use canonical tags to resolve duplicate content issues.

- Implement proper pagination practices: give each paginated page a self-referencing canonical, or canonicalize the series to a single view-all page where one exists, so that individual paginated pages are not treated as separate, thin content.

- Remove or consolidate poor-performing or outdated content that no longer serves user intent or business objectives.

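- Use the Removals tool in Google Search Console for fast but temporary removal from results (it hides a URL for about six months), and apply noindex tags or return a 404 or 410 status for permanent removal. A robots.txt disallow on its own does not prevent indexing.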

Conclusion: Proactive Indexation as a Competitive Advantage

Index bloat creates sitewide quality signals that affect overall SEO performance. Google’s Helpful Content system applies classifiers across entire domains, meaning bloated indexes can suppress rankings even for high-quality pages. AI-generated SERP summaries increasingly pull from well-ranking content, making good indexation control essential for visibility in these emerging search features.

Continuous monitoring and iteration of indexation strategies adapt to algorithm changes and shifting business priorities. Reducing index bloat strengthens digital presence, prevents crawl budget drain, and eliminates confusion from duplicate content that makes it difficult for search engines to determine which pages to rank.

Proactive indexation management ensures search engines focus resources on pages that drive business results.