The Programmatic SEO Promise: Scaling Content, Avoiding Pitfalls

Why Programmatic SEO Exists

Programmatic SEO solves a resource problem: capturing search traffic across thousands of keyword variations without building each page manually. Instead of writing individual pages for every long-tail query, companies use templates and structured data to generate relevant, optimized pages at scale. This approach is essential when manual creation would be resource-prohibitive—particularly for businesses with large, repetitive datasets or diverse audience segments.

The model works best for SaaS companies targeting industry, use case, or integration combinations; marketplaces organizing product categories, brands, and price ranges; multi-location businesses serving every geographic market; and aggregators building comparison or directory sites. Examples include Yelp’s city and subcategory pages, Zapier’s integration pages, and Tripadvisor’s “Things to Do in [City]” guides. Each leverages automation to publish dozens to thousands of pages designed to rank for specific search intent.
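The template-plus-data idea can be sketched in a few lines of Python. This is a minimal illustration, not how any of the companies above build their pages: the template text, the `/integrations/` path, and the sample rows are all hypothetical.

```python
from string import Template

# Hypothetical template and dataset, for illustration only.
PAGE_TEMPLATE = Template("Connect $app_a to $app_b: $summary")

ROWS = [
    {"app_a": "Google Sheets", "app_b": "Notion",
     "summary": "Sync new rows into a database."},
    {"app_a": "Google Sheets", "app_b": "Slack",
     "summary": "Post a message when a row changes."},
]


def slugify(text: str) -> str:
    """Lowercase the text and join its alphanumeric runs with hyphens."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return "-".join(cleaned.split())


def build_pages(rows: list[dict[str, str]]) -> dict[str, str]:
    """Return a mapping of URL path to rendered page text, one per data row."""
    pages = {}
    for row in rows:
        path = f"/integrations/{slugify(row['app_a'])}-{slugify(row['app_b'])}"
        pages[path] = PAGE_TEMPLATE.substitute(row)
    return pages
```

Each row in the dataset becomes one URL, which is why the quality of the data, not the template, ends up deciding whether the pages differ in any meaningful way.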

The Core Advantage

Programmatic SEO delivers systematic coverage of long-tail search opportunities, consistent optimization across page sets, and efficient resource leverage. Once templates and data pipelines are established, page creation happens quickly—what would take months manually can be executed in moments. The approach also remains underutilized relative to traditional content strategies, creating a competitive advantage for early adopters.

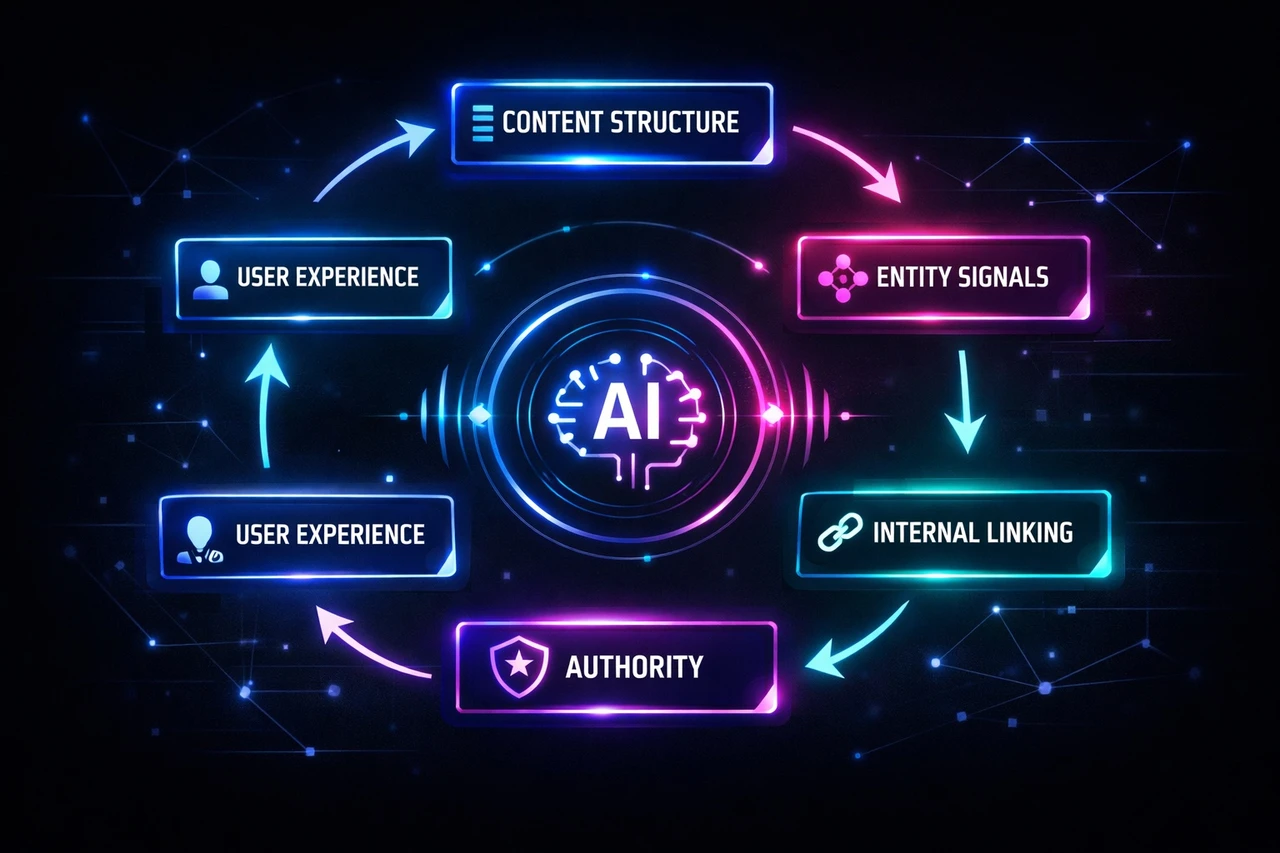

Success depends on three principles: relevance (targeting specific search intent), quality (unique data, insights, and positive user experience), and structure (consistent patterns that search engines can crawl and index efficiently). The most impactful implementations align with business goals, prioritizing templates with high conversion potential—bottom-funnel keywords like product comparison pages rather than broad awareness content.

The Technical Reality

The promise of scale comes with technical risk. Programmatic SEO introduces challenges around thin content, duplicate content, and crawl efficiency—issues that can trigger algorithmic suppression if pages fail to serve specific search intent with unique, actionable information. Avoiding these pitfalls requires deliberate technical SEO: internal linking strategies, clean URL structures, schema markup, and rigorous quality controls to ensure each generated page aligns with Google’s helpful content guidelines.

Understanding Duplicate and Thin Content

Programmatic SEO creates inherent risks that undermine organic visibility and search performance. When you generate hundreds or thousands of pages from templates and structured data, the line between scale and redundancy becomes critical.

Duplicate Content: Definition and Impact

Duplicate content refers to pages with identical or very similar text across different URLs. This manifests in two forms:

- Technical duplication: The same content becomes accessible at multiple URLs due to parameter variations, pagination, or session IDs.

- Content duplication: Different canonical URLs contain substantively similar text across pages, often from template reuse without sufficient data variation.

Search engines struggle to decide which duplicate page to index and rank, or whether to index them at all. Google does not impose a manual “duplicate content penalty” for normal cases, but algorithmic suppression occurs: search engine confusion, lower rankings from internal competition, and indexing issues that prevent pages from appearing in results altogether.
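Technical duplication is usually the easier form to detect, because the variants collapse to one URL once tracking and session parameters are stripped. A minimal sketch, assuming a hypothetical list of ignorable parameters (`IGNORED_PARAMS`) that you would replace with the ones your own site emits:

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content on this site.
IGNORED_PARAMS = {"sessionid", "sid", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Normalize a URL so that parameter-only variants map to one string."""
    parts = urlsplit(url)
    # Keep only content-bearing parameters, in a stable (sorted) order.
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    # Treat "/shoes/" and "/shoes" as the same path; keep "/" for the root.
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(kept), "")
    )
```

Grouping crawled URLs by `canonical_url` shows which sets of addresses serve the same content and need a canonical tag or redirect.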

Thin Content: Definition and Consequences

Thin content refers to pages lacking unique value, decision-making information, or substantive differentiation from other pages on your site or the broader web. Common causes include insufficient unique data points, keyword variations without meaningful content variation, and template-based text reuse without localization or enrichment.

Research shows that 29% of websites face duplicate content issues, and thin content creates many of the same problems. The operational consequences extend beyond rankings:

- Crawl budget waste: Search engines spend resources indexing redundant content instead of discovering valuable pages.

- Link equity dilution: External backlinks pointing to multiple versions of the same content split ranking signals.

- User experience degradation: Visitors encounter repetitive pages that fail to provide distinct value.

- AI system filtering: LLM-based systems using Retrieval-Augmented Generation may apply trust scoring that filters out duplicate-heavy domains as low-quality sources, reducing citation rates in AI-generated responses.

If programmatic pages don’t offer something unique, they probably won’t index or rank.

Crafting Unique Templates: The Foundation for Scalable, Quality Content

A programmatic SEO template is not a shortcut; it’s a product. The best templates start with a clear user job-to-be-done and build structure around genuinely answering it. Success depends on treating your template as the foundation of every generated page. Google publishes no uniqueness threshold, but a common rule of thumb is to make roughly 40–60% of each page’s content unique so that pages are not filtered as near-duplicates.

Start with Intent, Not Structure

Solid templates begin with intent mapping. Define the primary and secondary intents for each page type before designing layout or copy. Templates should function as “evidence blocks”—providing enough information for users to act without opening multiple tabs. That means including definition, decision factors, data-backed comparison, and action guidance in every template section.

Engineer Uniqueness into the System

Uniqueness in programmatic SEO is about materially changing information page-to-page, not swapping adjectives. The non-template part of the page must carry decision value: original data, specific analysis, or localized explanations. Measure uniqueness through similarity checks on rendered HTML and main content, with thresholds set by page type.

Use repeatable “unique modules”—methodology boxes, comparison tables, common mistakes sections, expert notes, mini FAQs—to create value without extensive manual rewrites. Build a publish gate that checks content completeness: word count, number of non-empty unique modules, and presence of entity-dependent tables or lists.
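A publish gate of this kind can be a small function that returns the reasons a page is not ready. The sketch below is one possible shape; the `RenderedPage` structure and the default thresholds (300 words, 3 modules) are assumptions to tune per page type.

```python
from dataclasses import dataclass, field


@dataclass
class RenderedPage:
    """Hypothetical container for a generated page, for illustration."""
    url: str
    body_text: str
    unique_modules: dict[str, str] = field(default_factory=dict)  # name -> text
    has_entity_table: bool = False


def publish_gate(page: RenderedPage, min_words: int = 300,
                 min_modules: int = 3) -> list[str]:
    """Return the list of failed completeness checks (empty means publish)."""
    failures = []
    if len(page.body_text.split()) < min_words:
        failures.append("word_count")
    # Only modules with real text count; whitespace-only modules are empty.
    filled = [name for name, text in page.unique_modules.items() if text.strip()]
    if len(filled) < min_modules:
        failures.append("unique_modules")
    if not page.has_entity_table:
        failures.append("entity_table")
    return failures
```

Returning named failures rather than a boolean makes it easy to report which module or data gap is blocking a batch of pages.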

Build Robust Data Models

Your data model determines template quality. Include qualifiers that change decisions—service type, coverage area, lead time—beyond basic attributes. Add a confidence layer to your datasets with metadata for source, timestamp, and reliability score. Adjust language or hide fields when data is unreliable. Store relationships (brand → models, city → neighborhoods) to enable natural internal linking and richer context.
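The confidence layer can live next to each value rather than in a separate system. A minimal sketch, assuming a 0.0 to 1.0 reliability scale and a 180-day freshness window (both are illustrative choices, as are the `Sourced` and `render_field` names):

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Sourced:
    """A data value plus the metadata needed to judge how far to trust it."""
    value: str
    source: str
    as_of: date
    reliability: float  # assumed scale: 0.0 (unknown) to 1.0 (verified)


def render_field(label: str, item: Sourced, today: date,
                 min_reliability: float = 0.5,
                 max_age_days: int = 180) -> Optional[str]:
    """Hide unreliable values and soften the wording of stale ones."""
    if item.reliability < min_reliability:
        return None  # the template should omit this field entirely
    if (today - item.as_of).days > max_age_days:
        return f"{label}: {item.value} (last verified {item.as_of.isoformat()})"
    return f"{label}: {item.value}"
```

The template then renders only the fields that come back non-empty, so weak data shrinks a page instead of filling it with unreliable claims.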

Spot-check sampled pages to ensure they answer real reader questions. Templates are both an HTML blueprint and a set of content rules. Operational safeguards—versioning, staging environment testing, incremental rollouts—prevent large-scale errors and protect your index health.

Technical Safeguards: Quality Gates, Indexing Controls, and Monitoring

Programmatic SEO relies on templates and automation to generate large volumes of pages. Without safeguards, template reuse without sufficient data variation produces duplicate and thin content at scale, and both degrade crawl efficiency and indexation quality.

Set Quality Thresholds Before Generation

Prevention begins at the generation stage. Define minimum requirements for each page: a distinct search intent, a set number of unique data points, supporting context, and meaningful internal links. Exclude low-value combinations—such as sparse filter pages or parameter variants—before they enter the system. Pages that don’t meet these thresholds should not be created, not merely hidden after the fact.
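Because this check runs before generation, it works on data records rather than rendered pages. The sketch below assumes a hypothetical record shape with `intent`, `data_points`, and `related_urls` keys, and illustrative minimums of five data points and two internal links.

```python
def eligibility_failures(record: dict, min_data_points: int = 5,
                         min_internal_links: int = 2) -> list[str]:
    """Return the reasons a data record should not become a page."""
    failures = []
    if not record.get("intent", "").strip():
        failures.append("missing_intent")
    # Count only data points that actually carry a value.
    filled = [value for value in record.get("data_points", {}).values()
              if value not in (None, "", [])]
    if len(filled) < min_data_points:
        failures.append("too_few_data_points")
    if len(record.get("related_urls", [])) < min_internal_links:
        failures.append("too_few_internal_links")
    return failures


def select_for_generation(records: list[dict]) -> list[dict]:
    """Keep only the records that pass every eligibility rule."""
    return [record for record in records if not eligibility_failures(record)]
```

Records that fail never reach the template, which is the difference between not creating a weak page and hiding it afterwards.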

Apply Indexing Controls and Consolidation

Even with quality gates, edge cases emerge. Use indexing directives strategically:

- Noindex: Pages that serve users but shouldn’t appear in search (low-demand variants, thin filters).

- Canonical tags: Signal the preferred version for near-duplicates to consolidate ranking signals.

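- Robots.txt: Block crawl-heavy paths like faceted navigation to protect crawl budget. Don’t combine this with noindex on the same URLs: a crawler that is blocked from a page never sees its noindex directive.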

- Redirects or deletion: Remove obsolete pages rather than leaving them indexed.

- Sitemaps: Include only pages you want search engines to prioritize—not every generated URL.
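The sitemap rule in particular is easy to enforce in code. A minimal sketch using the standard library, assuming each page is a dictionary with a `url` key and optional `noindex` and `canonical` keys (a hypothetical shape):

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Emit a sitemap containing only indexable, self-canonical URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        # Skip pages marked noindex and pages that canonicalize elsewhere.
        if page.get("noindex") or page.get("canonical", page["url"]) != page["url"]:
            continue
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")
```

Driving the sitemap from the same flags that control noindex and canonical tags keeps the three signals from contradicting each other.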

Google does not penalize duplicate content unless there’s manipulative intent—scraping, doorway pages, or cloaking. Algorithmic filtering simply hides redundant results. The risk is wasted crawl budget and lost visibility, not a manual action.

Monitor Indexation and Crawl Quality

Track index coverage, crawl stats, and excluded pages in Google Search Console. Watch for symptoms like pages discovered but not indexed, indexed pages with low impressions, or crawl spikes on parameterized URLs. Regular audits identify content cannibalization and indexation bloat early. The goal is not maximum pages indexed, but maximum value per indexed page.

To direct crawlers more effectively:

- Place programmatic pages close to the homepage by linking from navigation or footer elements.

- Create hub pages that organize and link to programmatic content clusters.

- Resolve 404 errors promptly and improve page speed to signal site quality.

- Use dynamic robots.txt to disallow unwanted content.
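A dynamic robots.txt can be generated from the same rules that decide which paths are low value, then checked before deployment. The sketch below is one way to do that with the standard library; the disallowed prefixes and sitemap URL in the usage note are placeholders.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(disallowed_prefixes: set[str], sitemap_url: str) -> str:
    """Render a robots.txt that blocks the given path prefixes for all agents."""
    lines = ["User-agent: *"]
    lines += [f"Disallow: {prefix}" for prefix in sorted(disallowed_prefixes)]
    lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


def is_crawlable(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Check a URL against the generated rules before shipping them."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)
```

For example, `build_robots_txt({"/search", "/filter/"}, "https://example.com/sitemap.xml")` blocks internal search and filter paths, and `is_crawlable` lets a test suite confirm that money pages are still reachable.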

Page speed is a modest direct ranking signal (Google measures it through Core Web Vitals), and it also shapes user experience: slow pages lose visitors before they engage with the content. Optimize loading times by minimizing animation, embedding video instead of GIFs, lazy loading images, compressing assets, removing redundant data, and keeping your CMS and plugins updated.

Quality Control Framework: Pre-Publication and Ongoing Validation

Running programmatic SEO at scale means publishing hundreds or thousands of pages—and without systematic quality control, you’ll ship duplicate content, thin pages, and technical errors that erode rankings. A quality control framework catches issues before publication and maintains standards as your catalog grows.

Pre-Publication Validation Systems

Build automated checks into your content pipeline before pages go live:

- Semantic duplicate detection: Use content embeddings and cosine similarity to identify pages that say the same thing in different words, then flag them for consolidation or differentiation.

- Automated fact-checking: Validate claims against trusted data sources and queue unverified content for manual review, preventing outdated information from publishing.

- Technical SEO validation: Catch meta tag problems, internal linking errors, and schema markup issues in staging. Use Python libraries like BeautifulSoup to confirm H1 tags are unique, validate schema markup, and keep meta descriptions to roughly 150-160 characters (a common guideline to limit truncation in results, not a limit Google enforces).

- Readability scoring: Evaluate sentence variety, paragraph structure, and logical flow—not just Flesch-Kincaid scores—to flag content that needs rewriting before it reaches users.

- Placeholder detection: Use regex or XPath to identify unfilled template variables before publication.
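Two of these checks are short enough to sketch directly: placeholder detection with a regular expression, and semantic duplicate detection with cosine similarity. The sketch assumes embeddings are already computed by whatever model you use; the placeholder syntaxes and the 0.95 threshold are illustrative assumptions.

```python
import math
import re

# Assumed placeholder syntaxes: {{ var }}, {var}, [[VAR]], and %VAR%.
PLACEHOLDER_RE = re.compile(r"\{\{\s*\w+\s*\}\}|\{\w+\}|\[\[\w+\]\]|%[A-Z_]+%")


def find_placeholders(text: str) -> list[str]:
    """Return every unfilled template variable left in the rendered text."""
    return PLACEHOLDER_RE.findall(text)


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def near_duplicate_pairs(embeddings: dict[str, list[float]],
                         threshold: float = 0.95) -> list[tuple[str, str]]:
    """Compare every pair of pages and return those at or above the threshold."""
    urls = sorted(embeddings)
    pairs = []
    for index, first in enumerate(urls):
        for second in urls[index + 1:]:
            if cosine_similarity(embeddings[first], embeddings[second]) >= threshold:
                pairs.append((first, second))
    return pairs
```

The pairwise loop is quadratic, so for large catalogs it is more practical to compare pages within the same template or cluster than across the whole site.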

Ongoing Health Monitoring

After publication, implement a 4-step health check:

- Data integrity validation: Titles of at least 15 characters, descriptions of at least 80 characters, and body text of at least 300 words.

- Index status monitoring: Use Google Search Console API to catch exclusion reasons like “Duplicate without canonical.”

- Content freshness assessment: Track organic traffic and ranking changes to identify stale or underperforming pages.

- Quality scoring: Prioritize pages for maintenance or deletion based on performance.

Run weekly signal monitoring (30 minutes) and monthly deep audits (2-3 hours). Set alert thresholds based on historical data—traffic drops exceeding 15% for two consecutive weeks, rankings falling out of the top 10, or excluded pages increasing more than 10%. Use tools like Screaming Frog to map URLs and spot patterns, then employ textual metrics (Python’s difflib for similarity scores above 70%) to identify duplicates. Pages under 300 words of unique text get priority for rewriting or consolidation.
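Both the similarity score and the traffic alert are simple to express in code. The sketch below uses `difflib.SequenceMatcher` on word tokens and reads "drops exceeding 15% for two consecutive weeks" as two week-over-week declines in a row; that reading, like the function names, is an assumption.

```python
from difflib import SequenceMatcher


def similarity(text_a: str, text_b: str) -> float:
    """Word-level similarity ratio between 0.0 and 1.0."""
    # autojunk=False stops difflib from ignoring very common words on long pages.
    matcher = SequenceMatcher(None, text_a.split(), text_b.split(), autojunk=False)
    return matcher.ratio()


def is_probable_duplicate(text_a: str, text_b: str, threshold: float = 0.70) -> bool:
    """Flag a pair whose similarity score is above the threshold."""
    return similarity(text_a, text_b) > threshold


def sustained_drop(weekly_clicks: list[int], max_drop: float = 0.15,
                   weeks: int = 2) -> bool:
    """True if each of the last `weeks` weeks fell more than `max_drop`."""
    if len(weekly_clicks) < weeks + 1:
        return False  # not enough history to judge
    recent = weekly_clicks[-(weeks + 1):]
    for previous, current in zip(recent, recent[1:]):
        if previous <= 0 or (previous - current) / previous <= max_drop:
            return False
    return True
```

Comparing main content only, with navigation and footer text removed, keeps shared boilerplate from inflating the score.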

Avoid common mistakes: set thresholds loosely enough that valid pages still pass, cache validation results to avoid performance bottlenecks, and track false positives to refine your rules over time.

Real-World Examples: Programmatic SEO in Practice

Integration and Comparison Platforms

Zapier demonstrates programmatic SEO at scale, building over 590K integration pages that target specific use cases like “Google Sheets and Notion integration.” The /apps/ directory alone drives substantial organic traffic by solving specific automation problems with proprietary data. G2 takes a similar approach with comparison and review pages, generating high-volume traffic through programmatically created pages for high-intent searches like “Compare Asana and monday.com” or “Best CRM software,” all powered by structured review data.

ProductHunt optimizes for discovery by creating landing pages for each product alongside “alternatives” pages, capturing users actively searching for new tools or competitive options. This approach works because it addresses explicit search intent at the moment users are evaluating solutions.

Vertical-Specific Applications

Companies in specialized verticals achieve strong results by building pages around their core data. Wise creates dynamic currency conversion pages with live rates, charts, and CTAs for long-tail keywords like “currency-converter/aud-to-eur-rate,” enriched with comparisons and FAQs. Freightify applies the same logic to logistics, building country-specific freight rate pages with dynamic charts and real-time quote CTAs that attract logistics companies searching for specific routes.

Atlassian ranks for long-tail queries by creating dedicated landing pages for specific use cases like “Jira for agile project management,” each including benefits, examples, and FAQs tied to their tools. DelightChat targets “Best Shopify [Category] Apps” searches, generating pages with clear titles, category descriptions, app names, logos, and features. Each page brings in steady monthly organic traffic.

Content and Demo-Led Growth

Storylane built thousands of interactive demo pages targeting long-tail searches like “how to build a Salesforce demo” or “how to curve text in Canva,” now ranking for nearly 60,000 organic keywords. They target both high-volume queries around popular tools and “quiet keywords” for less popular tools, building topical authority across the board.

Concurate’s work with a patent drawings SaaS client created jurisdiction-specific content clusters that resulted in 10 of 13 blogs appearing in Google’s AI Overviews and qualified leads within four months. For an edtech client, they built location-based training pages from a single framework, generating inbound from organizations with large employee bases.

Monitoring and Continuous Improvement

Programmatic SEO requires ongoing maintenance and quality monitoring to prevent content or keyword cannibalization that can undermine individual asset rankings. Regular audits are essential for identifying programmatic SEO issues before they escalate into indexation challenges or duplicate content problems at scale.

Establishing Quality Assurance Checkpoints

A programmatic SEO content QA process combines automated tests and human reviews to validate pages generated from templates or bulk content systems. QA becomes essential when content volume exceeds manual review capacity—typically between 1,000 and 5,000 pages. Prioritize checks by severity:

- Critical checks: HTTP 200 status, no unintended noindex flags, unique titles and meta descriptions, correct canonical tags, and valid schema properties.

- Major checks: Internal linking, content length above 200 words, and mobile rendering.

- Minor optimizations: Title and meta description length, and image alt attributes.
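Several of the critical checks can run on rendered HTML without any third-party dependency. The sketch below uses the standard library's `html.parser` and covers a subset only: it does not check HTTP status, schema, or cross-page uniqueness, and the issue names are hypothetical.

```python
from html.parser import HTMLParser


class HeadAudit(HTMLParser):
    """Collect the tags that the critical checks depend on."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.canonical = None
        self.noindex = False
        self.h1_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta":
            name = (attr.get("name") or "").lower()
            content = attr.get("content") or ""
            if name == "description":
                self.meta_description = content
            elif name == "robots" and "noindex" in content.lower():
                self.noindex = True
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def critical_issues(html: str) -> list[str]:
    """Return the critical checks that this page fails."""
    audit = HeadAudit()
    audit.feed(html)
    issues = []
    if not audit.title.strip():
        issues.append("missing_title")
    if not audit.meta_description:
        issues.append("missing_meta_description")
    if not audit.canonical:
        issues.append("missing_canonical")
    if audit.noindex:
        issues.append("unexpected_noindex")
    if audit.h1_count != 1:
        issues.append("h1_count")
    return issues
```

A CI job can run this over a sample of staged pages and fail the build when any page returns a non-empty list.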

Pre-publish checks catch deterministic errors like missing canonicals, placeholders, and schema syntax issues using CI-style gates. Post-publish monitoring tracks runtime issues such as indexing behavior, search snippets, and rendering differences. Automated content-level tests detect placeholders through regex or XPath, identify duplication using shingling or MinHash algorithms, and flag thin content below 150–200 words.
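Shingling can be sketched with plain sets. The example below computes exact Jaccard similarity over three-word shingles; MinHash is an approximation of this same measure for catalogs too large to compare exactly, and is not implemented here. The shingle size and the 200-word floor are assumptions.

```python
def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences in the text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles the two sets have in common (0.0 if both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def is_thin(text: str, min_words: int = 200) -> bool:
    """Flag pages whose text falls below the word-count floor."""
    return len(text.split()) < min_words
```

Shingles penalize reordered or lightly edited text less than exact matching does, which suits template output where only a few tokens change between pages.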

Tracking Performance Metrics and Indexation Health

Use indexation quality assurance tools like Google Search Console and Semrush to monitor crawl errors, duplicate content warnings, or pages excluded due to quality concerns. Key metrics include indexed rate, click-through rate, engagement metrics such as scroll depth and time on page, and conversion attribution.

Automated alerts should trigger for large jumps in 4xx or 5xx rates, canonical changes, or sudden drops in impressions. Target an indexation ratio of at least 80% within two weeks for new content rollouts. Systematic tracking through GA4 for template-level analysis and Looker Studio for segmented dashboards enables continuous monitoring to identify successful templates and retire underperforming content.
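These alert rules can be evaluated from two snapshots of the same metrics. In the sketch below, the snapshot keys and the error-jump and impression-drop thresholds are assumptions; only the 80% indexation target comes from the text above.

```python
def indexation_ratio(indexed: int, submitted: int) -> float:
    """Share of submitted URLs that are indexed (0.0 if nothing was submitted)."""
    return indexed / submitted if submitted else 0.0


def rollout_alerts(previous: dict, current: dict,
                   min_index_ratio: float = 0.80,
                   max_error_jump: float = 0.02,
                   max_impression_drop: float = 0.30) -> list[str]:
    """Compare two metric snapshots and return the alerts that should fire."""
    alerts = []
    if indexation_ratio(current["indexed"], current["submitted"]) < min_index_ratio:
        alerts.append("low_indexation")
    # error_rate is the share of crawled URLs returning 4xx or 5xx.
    if current["error_rate"] - previous["error_rate"] > max_error_jump:
        alerts.append("error_spike")
    if previous["impressions"] > 0:
        drop = (previous["impressions"] - current["impressions"]) / previous["impressions"]
        if drop > max_impression_drop:
            alerts.append("impression_drop")
    return alerts
```

For a new rollout, the `current` snapshot would be taken two weeks after publishing so the indexation check matches the target window.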

Scaling Safely: Strategic Implementation

Treat Every URL as a Product Decision

Programmatic SEO offers opportunities to capture long-tail search traffic at scale, but success hinges on prioritizing quality and user value over sheer volume. Every programmatic URL should be treated as a product that must earn its place in the index. This means implementing strict eligibility rules before publishing:

- 1 intent per URL.

- Minimum uniqueness blocks: at least 3 unique content blocks per URL that are not shared verbatim.

- Quality gates including similarity checks and intent validation.
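The "not shared verbatim" rule can be checked by fingerprinting content blocks across the whole page set. A minimal sketch, assuming each page is represented as a list of block strings (a hypothetical shape) and that blocks differing only in case or whitespace count as the same:

```python
import hashlib
from collections import Counter


def _fingerprint(block: str) -> str:
    """Hash a block after normalizing case and whitespace."""
    normalized = " ".join(block.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def unique_block_counts(pages: dict[str, list[str]]) -> dict[str, int]:
    """Count, per URL, the blocks that appear on no other page."""
    usage = Counter()
    for blocks in pages.values():
        # Count each block once per page, even if the page repeats it.
        usage.update({_fingerprint(block) for block in blocks})
    return {
        url: sum(1 for fp in {_fingerprint(block) for block in blocks} if usage[fp] == 1)
        for url, blocks in pages.items()
    }


def eligible_urls(pages: dict[str, list[str]], min_unique_blocks: int = 3) -> list[str]:
    """Return the URLs that meet the minimum number of unique blocks."""
    counts = unique_block_counts(pages)
    return [url for url in pages if counts[url] >= min_unique_blocks]
```

This catches verbatim reuse only; near-verbatim blocks still need a similarity check.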

Build Systems That Prevent Thin Content

Thin content is often a systems bug, not a writing bug. It stems from bad eligibility rules, weak uniqueness requirements, lack of quality gates, and no pruning loop. Successful implementations begin with a small set of proven templates and then scale systematically based on performance data.

- Use constraint-first writing to make content specific.

- Avoid doorway clusters: when keywords are near-identical in wording and intent, target them with one page rather than several.

- Separate “indexable” from “helpful” variants using canonical tags or noindex for low-demand variations.

Monitor, Prune, and Optimize Continuously

Continuous monitoring of performance is essential to identify and optimize successful templates and retire underperforming content. Implement a pruning loop every 60-90 days to review pages that haven’t earned traffic or engagement. Options include upgrading content with more unique data, noindexing thin variants, consolidating similar pages, or removing them entirely.
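The pruning loop amounts to a decision rule applied to every page in each review cycle. The ordering of the rules below is one reasonable choice, not a prescribed one, and the page fields (`clicks_90d`, `similar_to`, `more_data_available`, `useful_to_visitors`) are hypothetical.

```python
def pruning_action(page: dict) -> str:
    """Pick one of: keep, consolidate, upgrade, noindex, remove."""
    if page.get("clicks_90d", 0) > 0:
        return "keep"
    if page.get("similar_to"):
        return "consolidate"  # merge into the stronger, similar page
    if page.get("more_data_available"):
        return "upgrade"  # enrich with additional unique data
    if page.get("useful_to_visitors"):
        return "noindex"  # keep for users, drop from search
    return "remove"


def pruning_plan(pages: list[dict]) -> dict[str, list[str]]:
    """Group page URLs by the action chosen for them."""
    plan: dict[str, list[str]] = {}
    for page in pages:
        plan.setdefault(pruning_action(page), []).append(page["url"])
    return plan
```

Reviewing the grouped plan before acting on it keeps a human in the loop for removals, which are the hardest action to reverse.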

Drip-publishing pages gradually can make the process appear more natural to search engines than publishing all at once, while batch publishing caps prevent overwhelming your crawl budget.
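A drip schedule with a batch cap is a matter of slicing the URL list. The cap of 100 pages and the 7-day interval below are placeholder values, not recommendations from any search engine.

```python
from datetime import date, timedelta


def drip_schedule(urls: list[str], start: date, batch_cap: int = 100,
                  interval_days: int = 7) -> dict[date, list[str]]:
    """Split URLs into capped batches and assign each a publish date."""
    schedule = {}
    for offset in range(0, len(urls), batch_cap):
        batch_number = offset // batch_cap
        publish_on = start + timedelta(days=batch_number * interval_days)
        schedule[publish_on] = urls[offset:offset + batch_cap]
    return schedule
```

Watching indexation for each batch before releasing the next one turns the schedule into an early warning system for template problems.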

Key Takeaways

The focus should be on creating high-quality programmatic content that genuinely assists searchers in achieving specific goals, rather than merely targeting keyword variations. Companies that succeed in competitive search landscapes create more relevant, useful content that search engines favor.

Start with high-intent templates for bottom-funnel keywords to demonstrate clear business value. Quality controls, including human review and automated checks, remain the foundation of any programmatic SEO strategy that scales without risk.
