Introduction to Technical SEO in 2026

Why Technical SEO Matters More Than Ever

Technical SEO has become the bedrock of any successful website strategy. It’s not just about optimizing content anymore—technical SEO audits are now an essential part of ongoing site maintenance, ensuring your website remains competitive in an increasingly complex digital landscape. Think of it as the foundation of your entire SEO house: without it, everything else crumbles.

At its core, a technical SEO audit analyzes the technical aspects of your website to ensure search engines like Google, Bing, and Yahoo can effectively crawl, index, and rank your pages. But here’s what makes 2026 different—the web is no longer just visited by human users. AI agents now browse your site to extract specific information, fundamentally changing how we think about website maintenance. Your site must function as a reliable data source for the decentralized web.

The Modern Technical SEO Landscape

The definition of “search” itself has expanded dramatically. Users aren’t just Googling anymore—they’re prompting ChatGPT, asking Perplexity questions, and discovering content on TikTok. This means your technical architecture must serve data to multiple endpoints simultaneously. With AI Overviews triggering for approximately 18.57% of commercial queries, becoming a citation source for AI-synthesized answers is now crucial for e-commerce brands.

A comprehensive technical audit identifies and resolves issues that could undermine your search performance—from crawlability and indexation problems to site speed, mobile usability, security concerns, duplicate content, and navigation issues. These audits ensure nothing hinders your optimization efforts, allowing search engines to better understand and rank your content.

Taking Action on Technical Issues

Technical strategy only creates value when it translates into measurable business outcomes. For example, in our work with two competitive Boston restaurants, we built a structured digital ecosystem that doubled reach and increased follower growth by 96% over three years—without increasing ad spend. Smart architecture and channel alignment consistently outperform brute-force budgets.

The good news? Even minor technical fixes identified during an audit can significantly improve your rankings. “Technical Entity Management” has emerged as a critical discipline in 2026, especially with tightened indexing thresholds for AI-generated content. Pages must immediately signal quality and uniqueness to avoid being overlooked by answer engines that prioritize fast, structured data.

We recommend performing an initial audit on new sites, then conducting quarterly reviews—especially if you’re continuously publishing new content. If your rankings have stagnated or declined, an audit should be your first move. With discovery now happening across vertical platforms like Amazon and YouTube, alongside traditional search, technical excellence is no longer optional—it’s essential.

Advanced Crawlability and Bot Governance

Strategic Bot Management and Robots.txt Optimization

Bot governance has become a critical component of technical SEO strategy. Rather than treating all crawlers equally, you should now differentiate between beneficial retrieval agents and non-beneficial training scrapers through your robots.txt file.

OpenAI’s retrieval agent (OAI-SearchBot) surfaces content in ChatGPT’s “Search” feature, so allowing this bot lets your content gain visibility in AI-powered search results. Conversely, GPTBot, OpenAI’s training crawler, should typically be blocked if you want to keep your data out of future model training; blocking it doesn’t affect current search visibility. Google-Extended presents a strategic decision. It is a robots.txt control token rather than a separate crawler: allowing it can improve visibility in Gemini-powered answers through Vertex AI, while blocking it keeps your content out of Google’s model training without changing how Googlebot crawls and indexes the site for Search.

A recommended robots.txt approach for e-commerce sites includes: allowing OAI-SearchBot across your entire site, while disallowing both GPTBot and Google-Extended. This balanced strategy protects your proprietary data while maximizing visibility in emerging AI search channels.
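As a minimal sketch of the policy described above, the snippet below holds a robots.txt body as a string and checks it with Python’s standard `urllib.robotparser`. The example.com URL is a placeholder, and the catch-all `User-agent: *` group is an assumption added for illustration; adapt the rules to your own site before deploying anything like it.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: allow the retrieval agent, block the training tokens.
# The final catch-all group is an assumption, not part of the recommendation.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse a robots.txt body without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
for agent in ("OAI-SearchBot", "GPTBot", "Google-Extended", "Googlebot"):
    allowed = parser.can_fetch(agent, "https://example.com/products/widget")
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running a check like this in a deployment pipeline is one way to catch an accidental `Disallow: /` for a crawler you meant to keep.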

Crawl Quality and HTTP Status Code Management

Google’s December 2025 rendering shift clarifies that pages returning non-200 status codes (4xx or 5xx errors) may be excluded from the rendering queue entirely, so status codes need to be accurate at the HTTP level. Single-page applications (SPAs) are especially exposed: a SPA that serves a 200 OK shell for a missing page hides the error from crawlers, which may treat the result as a thin, indexable page and later de-index it.

“Invisible 500 errors” represent another hidden threat. These occur when server errors are caught client-side, serving a 200 OK status with an error message. Log file analysis remains your most reliable source of truth for identifying these bot interaction issues before they impact indexation.
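A rough sketch of the log analysis mentioned above: the function tallies HTTP status codes for requests made by known crawlers. It assumes the common Combined Log Format, and the bot list and sample lines are illustrative only. One place an “invisible 500” may surface is a 5xx response on an API route that a page calls while it renders.

```python
import re
from collections import Counter

# Combined Log Format: ... "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

BOT_TOKENS = ("Googlebot", "bingbot", "OAI-SearchBot")  # illustrative list


def bot_status_counts(lines):
    """Count (bot, status) pairs for requests made by known crawlers."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        bot = next((t for t in BOT_TOKENS if t.lower() in agent), None)
        if bot:
            counts[(bot, int(match.group("status")))] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:01 +0000] "GET /api/products HTTP/1.1" 500 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Jan/2026:10:00:02 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

counts = bot_status_counts(SAMPLE)
for (bot, status), hits in sorted(counts.items()):
    print(bot, status, hits)
```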

Mobile-first verification is equally essential. Best practice is to run every high-value crawl with the Googlebot Smartphone user agent, because mobile navigation that is not in the DOM until clicked (a hamburger menu, for example) can conceal your site structure from Google’s primary crawler.

Index Budget and URL Parameter Strategy

Managing your index budget—the ratio of quality pages to total pages—is as critical as managing crawl budget. For e-commerce sites, faceted navigation filters create a “combinatorial explosion” of low-value URLs that waste crawl resources.

Implement a crawlability matrix: broad categories should be indexed and followed, specific filter combinations with unique H1 tags warrant indexing, granular filters should be canonicalized to category roots, and sort/session parameters should be blocked via robots.txt. Permanently out-of-stock items should return 404/410 status codes, while temporarily unavailable products should remain live with server-side rendered recommendations.

Strategic pruning—intentionally removing or blocking low-quality pages—concentrates link equity on high-performing assets. Additionally, implementing IndexNow allows instant notification of URL changes across Bing, Yandex, and ChatGPT, proving crucial for rapidly changing inventory.

Indexing Strategy and URL Management

Building an Indexing-First Approach

Gone are the days when a simple 200 OK status code guaranteed search engine visibility. Today’s indexing landscape demands that pages signal quality and uniqueness from the moment crawlers arrive. Search engines have tightened indexing thresholds due to the proliferation of AI-generated content, making it critical to distinguish your pages as valuable assets rather than thin duplicates.

Your indexing strategy must balance crawl budget with what we call “index budget”—the ratio of quality pages that search engines deem worthy of retention versus your total page count. This distinction matters enormously for large sites. Rather than maximizing crawled pages, focus on maximizing the percentage of crawled pages that actually deserve indexing. This is where intentional pruning comes in: regularly audit and remove low-traffic pages, outdated products, and thin content to concentrate link equity on your best-performing assets.
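To make the ratio concrete, here is a small worked example. The page counts are hypothetical, and treating “quality pages” as a single number you can count is a simplifying assumption.

```python
def index_budget_ratio(quality_pages: int, total_pages: int) -> float:
    """Share of a site's pages that deserve to stay in the index."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return quality_pages / total_pages


# Hypothetical site: 12,000 quality pages out of 80,000 total.
before = index_budget_ratio(12_000, 80_000)

# After pruning 50,000 thin or outdated URLs, the same 12,000 pages remain.
after = index_budget_ratio(12_000, 30_000)

print(f"before pruning: {before:.0%}, after pruning: {after:.0%}")
```

The quality page count does not change in this example; pruning improves the ratio only by shrinking the denominator.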

Managing URL Parameters and Faceted Navigation

E-commerce sites face a unique challenge: faceted navigation (filters like size, color, and price) can create a “combinatorial explosion” of low-value URLs that drain crawl budget without adding real value. Implement a crawlability matrix for parameters to categorize your URLs strategically:

- Broad categories (e.g., /womens-shoes): Index and follow these with full SEO treatment.

- Specific filters (e.g., /womens-shoes/red): Index with unique H1 tags and meta descriptions so these pages are not treated as duplicates of the parent category.

- Granular filters (e.g., /womens-shoes?size=9&width=wide): Canonicalize to the category root or use noindex to consolidate authority.

- Sort and session parameters (e.g., ?sort=price_asc): Block entirely via robots.txt to preserve crawl budget for pages that matter.
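The matrix above can be sketched as a small URL classifier. The parameter names (`sort`, `sessionid`) and the rule that a second path segment means a “specific filter” are assumptions made for illustration; a real site would encode its own URL conventions.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical parameter names that should never be crawled.
BLOCKED_PARAMS = {"sort", "sessionid"}


def crawl_directive(url: str) -> str:
    """Map a URL to one of the four treatments in the crawlability matrix."""
    parts = urlsplit(url)
    params = set(parse_qs(parts.query, keep_blank_values=True))
    segments = [segment for segment in parts.path.split("/") if segment]

    if params & BLOCKED_PARAMS:
        return "block-in-robots"           # sort and session parameters
    if params:
        return "canonicalize-to-category"  # granular filter combinations
    if len(segments) >= 2:
        return "index-with-unique-h1"      # specific filter pages
    return "index-follow"                  # broad categories


for example in (
    "/womens-shoes",
    "/womens-shoes/red",
    "/womens-shoes?size=9&width=wide",
    "/womens-shoes?sort=price_asc",
):
    print(example, "->", crawl_directive(example))
```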

Handling Soft 404s and HTTP Status Codes

A critical but often overlooked issue: pages returning a 200 OK status with an “unavailable” message are “soft 404s” that degrade site quality. Google’s December 2025 rendering update clarified that pages serving non-200 HTTP status codes may be excluded from the rendering queue entirely.

For permanently gone products, return a proper 404 or 410 status code. For temporarily out-of-stock items, keep the page live (200 OK) but ensure recommended or similar products are server-side rendered to provide crawlable value. This distinction prevents wasting crawl budget on pages that offer no real content to users or search engines.
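The rule above reduces to a small decision function. The `Availability` states are hypothetical names for this sketch, and 410 is chosen for discontinued items, though 404 is equally valid under the guidance above.

```python
from enum import Enum


class Availability(Enum):
    IN_STOCK = "in_stock"
    TEMPORARILY_OUT = "temporarily_out"
    DISCONTINUED = "discontinued"


def status_for(availability: Availability) -> int:
    """Pick the HTTP status a product page should return."""
    if availability is Availability.DISCONTINUED:
        return 410  # permanently gone; 404 would also be acceptable
    # In stock or temporarily out: keep the page live and crawlable.
    return 200


for state in Availability:
    print(state.value, status_for(state))
```

The important part is that the status is decided on the server, before any HTML is sent, rather than by client-side code that swaps in an “unavailable” message.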

Hreflang and IndexNow Implementation

International sites must implement hreflang tags with strict integrity: every page needs a self-referencing hreflang tag, and if Page A links to Page B as an alternate, Page B must link back to Page A. Include an x-default tag as the fallback for users whose browser language settings match none of your listed versions.

For rapidly changing inventory, leverage IndexNow (supported by Bing, Yandex, and ChatGPT) to push instant notifications when URLs are added, updated, or deleted—a game-changer for e-commerce freshness signals.

JavaScript SEO and Rendering Engineering

Understanding the Rendering Challenge

JavaScript rendering is arguably the most underaudited pillar of technical SEO, yet it’s where many sites stumble silently. The critical issue: server-side rendered HTML and client-side rendered HTML often diverge in ways that are invisible to the naked eye but catastrophic for indexing. With Googlebot’s rendering queue introducing delays of 3-7 days, JavaScript-dependent content may never be indexed at all without explicit rendering strategies.

The stakes got higher in December 2025 when Google clarified that pages returning non-200 HTTP status codes (like 4xx or 5xx) may be excluded from the rendering queue entirely. This is a particular risk for Single Page Applications (SPAs) that serve a generic 200 OK shell for error pages, or when a 404 header prevents client-side JavaScript from rendering helpful content.

Critical Content Must Live in Raw HTML

Here’s the non-negotiable rule: critical content—headings, body text, internal links, and structured data—must be present in your raw HTML without JavaScript execution. Use Google Search Console’s URL Inspection Tool to compare the rendered HTML Googlebot sees against your page’s raw HTML source. Content, links, or schema appearing only in the rendered view is JavaScript-dependent and at indexing risk.

Test this yourself by disabling JavaScript in Chrome DevTools, or by fetching the page with an SEO crawler that has JavaScript rendering switched off. If your content disappears, you have a problem. Internal navigation links should use standard anchor tags with href attributes, not JavaScript router pushes without HTML fallbacks. Similarly, JSON-LD structured data should be rendered in the initial server response, not injected by JavaScript. Meta tags (title, description, robots, canonical) must also be present in the raw HTML, because tags set by JavaScript may not be processed by Googlebot.
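A minimal sketch of that raw-HTML check, using only Python’s standard `html.parser`. It inspects a response body exactly as the server sent it (no JavaScript is executed) and reports which critical signals are missing. The two sample documents are invented for illustration, and the list of signals is an assumption based on the items named above.

```python
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Record which critical SEO signals appear in unrendered HTML."""

    def __init__(self):
        super().__init__()
        self.found = {"title": False, "h1": False, "canonical": False,
                      "meta_description": False, "json_ld": False}
        self.href_links = 0

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag in ("title", "h1"):
            self.found[tag] = True
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.found["canonical"] = True
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.found["meta_description"] = True
        elif tag == "script" and attr.get("type") == "application/ld+json":
            self.found["json_ld"] = True
        elif tag == "a" and attr.get("href"):
            self.href_links += 1


def audit_raw_html(html: str) -> RawHtmlAudit:
    audit = RawHtmlAudit()
    audit.feed(html)
    audit.close()
    return audit


def missing_signals(html: str) -> list:
    return [name for name, ok in audit_raw_html(html).found.items() if not ok]


CSR_SHELL = ('<html><head><title>Shop</title></head><body>'
             '<div id="root"></div><script src="/app.js"></script></body></html>')
SSR_PAGE = ('<html><head><title>Red Shoes</title>'
            '<meta name="description" content="Red shoes in all sizes.">'
            '<link rel="canonical" href="https://example.com/womens-shoes/red">'
            '<script type="application/ld+json">{"@type": "Product"}</script>'
            '</head><body><h1>Red Shoes</h1>'
            '<a href="/womens-shoes">All shoes</a></body></html>')

print("CSR shell is missing:", missing_signals(CSR_SHELL))
print("SSR page is missing:", missing_signals(SSR_PAGE))
```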

Choosing the Right Rendering Architecture

For e-commerce in 2026, ISR (Incremental Static Regeneration) is the preferred architecture, offering the speed of static sites with the freshness of dynamic ones. Client-Side Rendering (CSR) is a liability for Product Detail Pages due to indexing delays. Server-Side Rendering (SSR) ensures bots see content immediately but can slow down Time to First Byte (TTFB).

Modern frameworks are moving toward Partial Hydration or Island Architecture to optimize Core Web Vitals: less JavaScript executing on the main thread directly improves Interaction to Next Paint (INP) scores. Optimize your JavaScript bundle sizes with code splitting and tree shaking, and make sure no hydration errors appear in your browser console on first page load. Hydration errors indicate mismatches between server-rendered and client-rendered HTML, which can leave content invisible to Googlebot.

Core Web Vitals and User Experience Signals

Core Web Vitals have become essential ranking factors that directly impact your site’s search performance and user satisfaction. These metrics measure critical aspects of page experience, and optimizing them is non-negotiable for any technical SEO strategy in 2026.

Understanding the Three Core Web Vitals

Core Web Vitals consist of three key metrics that Google uses to evaluate page experience. LCP (Largest Contentful Paint) measures how quickly your main content loads—typically your hero image or primary product photo for e-commerce sites. INP (Interaction to Next Paint) has replaced the older FID metric and now assesses how responsive your page is to all user interactions throughout their entire visit. Finally, CLS (Cumulative Layout Shift) tracks visual stability, ensuring elements don’t unexpectedly move around as the page loads.

Understanding these metrics is the first step toward improving your page experience signals and maintaining competitive search rankings.

Optimizing for LCP and Load Speed

Website speed remains a critical ranking factor that influences both search visibility and user experience. To optimize LCP effectively, implement fetchpriority="high" for your largest contentful element to prioritize its loading. Consider standardizing on AVIF image formats with WebP fallbacks for product images, as this significantly reduces load times. For long pages with below-the-fold content, apply content-visibility: auto to off-screen elements to reduce unnecessary rendering work and improve overall page performance.
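For illustration, the helper below renders the kind of markup this implies: an AVIF source with a WebP fallback and `fetchpriority="high"` on the image itself. The function name, file paths, and dimensions are hypothetical; explicit `width` and `height` are included because they also help the browser reserve space for the image.

```python
from html import escape


def hero_picture(avif_url: str, webp_url: str, alt: str,
                 width: int, height: int) -> str:
    """Render a <picture> element for the page's likely LCP image."""
    return (
        "<picture>\n"
        f'  <source srcset="{escape(avif_url)}" type="image/avif">\n'
        f'  <img src="{escape(webp_url)}" alt="{escape(alt)}" '
        f'width="{width}" height="{height}" fetchpriority="high">\n'
        "</picture>"
    )


markup = hero_picture("/img/hero.avif", "/img/hero.webp",
                      "Red running shoe", 1200, 800)
print(markup)
```

Only the single largest above-the-fold image should get the high priority hint; applying it to many images defeats its purpose.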

Google’s PageSpeed Insights tool provides detailed analysis and actionable recommendations for improving your page speed across performance, accessibility, best practices, and SEO dimensions.

Mastering INP for Responsiveness

INP measures how quickly your page responds to user interactions, with a good score being 200 milliseconds or less. Scores above 500ms are considered poor and can negatively impact your rankings. To optimize INP, address three key areas: input delay, JavaScript processing time, and presentation delay (layout calculations and pixel painting).
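The thresholds above translate into a simple classifier. In this sketch, the latency of one interaction is taken as the sum of the three phases just listed; the function names and sample timings are hypothetical.

```python
def interaction_latency_ms(input_delay: float, processing: float,
                           presentation_delay: float) -> float:
    """Latency of a single interaction as the sum of its three phases."""
    return input_delay + processing + presentation_delay


def classify_inp(inp_ms: float) -> str:
    """Bucket an INP value using the 200 ms and 500 ms thresholds."""
    if inp_ms <= 200:
        return "good"
    if inp_ms <= 500:
        return "needs improvement"
    return "poor"


# Hypothetical click: 40 ms waiting, 120 ms in handlers, 30 ms to paint.
latency = interaction_latency_ms(40, 120, 30)
print(latency, classify_inp(latency))
```

Breaking a slow interaction into its phases shows where to spend effort: a long input delay points at other work blocking the main thread, while a long processing time points at the event handlers themselves.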

Engineering solutions for INP include using scheduler.yield() to allow JavaScript tasks to yield control back to the main thread, preventing the browser from becoming overwhelmed. Additionally, implement debouncing on input handlers—such as search bars—to prevent excessive event firing and maintain smooth user interactions throughout the page experience.

Structured Data and the Language of Entities

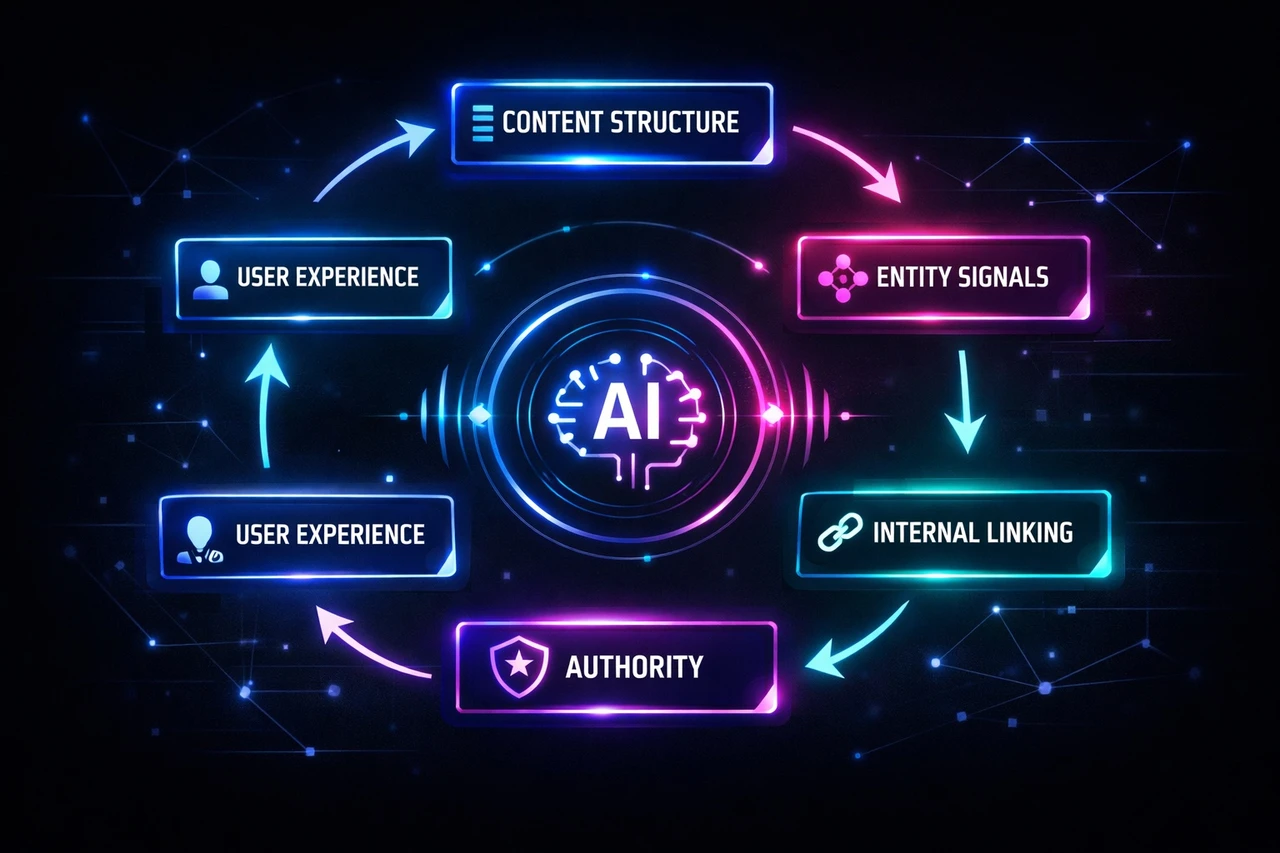

Understanding Structured Data’s Role in Modern SEO

Structured data—or schema markup—is no longer just about getting rich snippets in search results. It’s become the primary language that search engines and Large Language Models (LLMs) use to understand entities on your pages. By implementing Schema.org markup, you’re essentially translating your content into a format that both Google and AI systems can comprehend at a deeper level.

The most effective format for structured data is JSON-LD, though Microdata and RDFa are also valid options. This markup helps search engines index and categorize your pages correctly while capturing valuable SERP features like featured snippets, reviews, and FAQ sections. Beyond rankings, structured data contributes to semantic search and strengthens your E-E-A-T signals: experience, expertise, authoritativeness, and trustworthiness.

Critical Updates for 2025/2026 Compliance

Google has significantly tightened structured data requirements for e-commerce sites. Merchant Center now mandates specific properties like shippingDetails and hasMerchantReturnPolicy in your structured data. The returnPolicyCountry field must use strict ISO 3166-1 alpha-2 country codes. If you can’t implement product-level data, organization-level structured data serves as a global fallback for return policies.
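As a hedged sketch, the snippet below builds Product JSON-LD that carries both properties and runs a format-only check on the country codes. The product, prices, and return window are invented; the property names follow Schema.org, but which properties Google requires for a given feature should be confirmed against its current documentation. The regular expression checks only the two-letter uppercase shape, not membership in the ISO 3166-1 list.

```python
import json
import re

ALPHA2 = re.compile(r"^[A-Z]{2}$")  # shape check only, not a full ISO lookup


def product_json_ld(name: str, price: str, currency: str, country: str) -> dict:
    """Build illustrative Product markup with shipping and return details."""
    if not ALPHA2.match(country):
        raise ValueError(f"expected a 2-letter uppercase country code: {country!r}")
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
            "shippingDetails": {
                "@type": "OfferShippingDetails",
                "shippingRate": {"@type": "MonetaryAmount",
                                 "value": "4.95", "currency": currency},
                "shippingDestination": {"@type": "DefinedRegion",
                                        "addressCountry": country},
            },
            "hasMerchantReturnPolicy": {
                "@type": "MerchantReturnPolicy",
                "applicableCountry": country,
                "returnPolicyCountry": country,
                "returnPolicyCategory":
                    "https://schema.org/MerchantReturnFiniteReturnWindow",
                "merchantReturnDays": 30,
            },
        },
    }


markup = product_json_ld("Red Running Shoe", "49.99", "USD", "US")
print(json.dumps(markup, indent=2))
```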

To establish credibility, use the sameAs property in your Organization schema to link to verified profiles on LinkedIn, Crunchbase, or Wikipedia. For blog content, implement ProfilePage schema for author bios to reinforce expertise signals.

Preventing Schema Drift and Testing Your Markup

One critical issue to avoid is “Schema Drift”: your JSON-LD data contradicting what’s actually visible on the page. Google’s structured data guidelines require markup to reflect the visible content, and a mismatch can cost you rich result eligibility or trigger a manual action. Implement automated testing pipelines using tools like Puppeteer or Cypress to catch these discrepancies before they impact your rankings.
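The core of such a check is small. The sketch below is not a Puppeteer or Cypress test; it swaps in plain string parsing over static HTML to show the comparison itself. The `<span class="price">` selector and the sample markup are assumptions, and a production test would run against the rendered page.

```python
import json
import re
from decimal import Decimal

JSON_LD = re.compile(
    r'<script type="application/ld\+json">(.*?)</script>', re.DOTALL)
# Hypothetical markup for the price a shopper actually sees.
VISIBLE_PRICE = re.compile(
    r'<span class="price">\s*\$?([0-9]+(?:\.[0-9]+)?)\s*</span>')


def price_drift(html: str):
    """Return (schema_price, visible_price) if they differ, else None."""
    schema = JSON_LD.search(html)
    visible = VISIBLE_PRICE.search(html)
    if not schema or not visible:
        raise ValueError("page is missing JSON-LD or a visible price")
    schema_price = Decimal(str(json.loads(schema.group(1))["offers"]["price"]))
    visible_price = Decimal(visible.group(1))
    return None if schema_price == visible_price else (schema_price, visible_price)


LD = '<script type="application/ld+json">{"@type": "Product", "offers": {"price": "49.99"}}</script>'
CONSISTENT = LD + '<span class="price">$49.99</span>'
DRIFTED = LD + '<span class="price">$59.99</span>'

print(price_drift(CONSISTENT))
print(price_drift(DRIFTED))
```

Prices are compared as `Decimal` values rather than strings so that "49.9" and "49.90" count as equal.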

Always validate your structured data using Google’s Rich Results Test or Classy Schema. These tools reveal which rich results your content is eligible for and identify any invalid markup that violates Google’s guidelines. Regular audits through your site audit tool’s Markup report will catch issues preventing your content from appearing in enhanced search features.

Optimizing for Generative AI Systems

As AI Overviews reshape search, structured data takes on new importance. Generative Engine Optimization (GEO) focuses on formatting content for LLMs. Use the “BLUF” (Bottom Line Up Front) method and HTML definition lists (<dl>, <dt>, <dd>) to optimize for Retrieval Augmented Generation systems; definition lists are reportedly 30-40% more likely to be cited by LLMs. Maintain a proper semantic hierarchy across H1-H6, giving each major section its own H2, so AI models understand how your content is organized.

Generative Engine Optimization (GEO)

As search evolves beyond traditional rankings, Generative Engine Optimization (GEO) has become a critical component of your technical SEO strategy. GEO is the art of formatting content so it can be easily “ingested” and “reconstructed” by Large Language Models (LLMs) like GPT-5 and Gemini. This shift represents a fundamental change in how you need to structure and present information for modern search engines.

Optimizing for AI-Powered Search

The foundation of GEO lies in understanding how AI engines retrieve and synthesize information. Modern AI systems use Retrieval Augmented Generation (RAG) to search vector databases for relevant text “chunks” and generate answers. This means your content must be easily chunked and logically organized. Gone are the days of burying your key insights in lengthy paragraphs—AI models need immediate, digestible information.

Implement the BLUF Method (Bottom Line Up Front) by structuring your content so the core answer appears in the first sentence. This approach aligns perfectly with how LLMs process and prioritize information. Additionally, use HTML definition lists (<dl>, <dt>, <dd>) for specifications and technical details. Research shows that LLMs are 30-40% more likely to cite definition list formats than standard paragraphs, making them invaluable for technical content.
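A small sketch of the definition-list format: the helper turns a mapping of specification names to values into `<dl>` markup. The function name and the sample specs are hypothetical.

```python
from html import escape


def specs_to_dl(specs: dict) -> str:
    """Render term/definition pairs as an HTML definition list."""
    rows = "\n".join(
        f"  <dt>{escape(term)}</dt>\n  <dd>{escape(value)}</dd>"
        for term, value in specs.items()
    )
    return f"<dl>\n{rows}\n</dl>"


print(specs_to_dl({"Weight": "1.2 kg", "Battery life": "10 hours"}))
```

Each term sits directly next to its value, which keeps the pair together if the page is split into chunks for retrieval.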

Structuring Content for AI Citations

In AI Overviews, there are no traditional rankings, only citations. Your content must serve as a source of “ground truth” that AI systems can confidently reference. Create dedicated “Specs” or “Data” sections with distinct H2 tags to highlight numerical information. AI models actively look for sections with high numerical density, so grouping statistics and data points together increases your chances of being cited.

Finally, maintain strict semantic hierarchy using H1-H6 tags throughout your content. This structured approach helps AI understand parent-child relationships within your information architecture, making it easier for LLMs to extract, contextualize, and cite your content accurately. By optimizing for GEO today, you’re future-proofing your technical SEO strategy for the next generation of search.
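One way to audit that hierarchy is to scan a page’s headings for skipped levels, such as an H2 followed directly by an H4. The sketch below uses Python’s standard `html.parser`; treating any jump of more than one level as an issue is an assumption about what “strict” means here.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html: str) -> list:
    """Describe every place where the outline jumps more than one level down."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    return [
        f"h{previous} is followed by h{current}"
        for previous, current in zip(collector.levels, collector.levels[1:])
        if current > previous + 1
    ]


PAGE = "<h1>Guide</h1><h2>Specs</h2><h4>Battery</h4><h2>Data</h2><h3>Sales</h3>"
print(skipped_heading_levels(PAGE))
```

Moving back up the outline (from H4 to H2, for example) is fine; only downward jumps that skip a level are reported.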

International and Real-Time Indexing

Mastering Hreflang Tags for Global SEO Success

If you’re running an international website, hreflang tags are non-negotiable. These critical elements tell search engines which language and regional version of your content to serve to different users. However, hreflang implementation is notoriously fragile—and that’s where most sites stumble.

The golden rule? Every page must contain a self-referencing hreflang tag. If your English page links to a German version, that German page must link back to the English one. Missing return tags are the #1 cause of hreflang failure. Additionally, always implement hreflang="x-default" for your language selector page or global homepage to catch users whose language preferences don’t match your specific regional versions.
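These rules are mechanical enough to check in code. The sketch below takes a mapping from each page URL to its hreflang annotations and reports missing self-references, missing x-default entries, and missing return tags. The data structure and URLs are hypothetical; in practice the mapping would come from a crawl. A target page that is absent from the mapping is reported as not linking back.

```python
def hreflang_issues(pages: dict) -> list:
    """Check self-reference, x-default, and return tags for each page."""
    issues = []
    for url, alternates in pages.items():
        targets = set(alternates.values())
        if url not in targets:
            issues.append(f"{url}: missing self-referencing hreflang")
        if "x-default" not in alternates:
            issues.append(f"{url}: missing x-default")
        for target in sorted(targets - {url}):
            if url not in pages.get(target, {}).values():
                issues.append(f"{url}: {target} does not link back")
    return issues


EN = "https://example.com/en/"
DE = "https://example.com/de/"

broken = {
    EN: {"en": EN, "de": DE, "x-default": EN},
    DE: {"de": DE},  # no return tag to EN, no x-default
}
fixed = {
    EN: {"en": EN, "de": DE, "x-default": EN},
    DE: {"en": EN, "de": DE, "x-default": EN},
}

for issue in hreflang_issues(broken):
    print(issue)
```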

Beyond basic implementation, watch out for common hreflang pitfalls: missing or conflicting attributes, incorrect values, language mismatches, and broken hreflang links. These issues prevent your international audience from seeing the right content and can tank your global SEO ROI. Hreflang problems can also affect rankings directly, because the pages in a multi-language cluster share ranking signals. Use your SEO platform’s International SEO or localization audit tools to identify and fix these issues systematically.

For additional optimization, consider your URL structure. Some sites benefit from country-specific paths (like domain.com/ca for Canada) or country-code domains (like domain.ca), which make regional targeting even clearer to search engines.

IndexNow: The Push Protocol for Real-Time Indexing

While Google relies on crawling to discover content changes, other major search engines operate differently. IndexNow is a push protocol used by Bing, Yandex, and even ChatGPT that instantly notifies search engines when your URLs change.

This distinction matters enormously for e-commerce sites with rapidly fluctuating inventory and pricing. Without IndexNow, price updates might take days to reflect in Bing or ChatGPT results. With it, changes can appear within minutes. Since these alternative search engines represent nearly 30% of the search market, missing real-time indexing is a significant oversight.

The good news? Most modern CDNs offer one-click IndexNow integration, making implementation straightforward. Adding IndexNow to your technical SEO checklist ensures your content stays fresh across all major search engines, not just Google.
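For sites without a CDN integration, a submission is a single JSON POST. The sketch below builds the request with Python’s standard library but does not send it. The host, key, and URLs are placeholders, and it assumes the key file is hosted at the site root as `<key>.txt`; check the IndexNow documentation for the current endpoint and limits before relying on it.

```python
import json
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list) -> Request:
    """Build (but do not send) a bulk IndexNow submission."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the verification key file lives at the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    "example.com",
    "abc123",  # placeholder key
    ["https://example.com/womens-shoes/red"],
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# To submit for real: urllib.request.urlopen(request)
```

Triggering a call like this from the inventory or pricing system whenever a product changes is what makes the push model useful for fast-moving catalogs.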

Conclusion: Mastering Technical SEO for 2026

The New Paradigm: Building for Machines, Not Tricking Them

Technical SEO in 2026 isn’t about gaming the system—it’s about engineering transparency with the machines that power search discovery. The old playbook of “tricking” crawlers is dead. Instead, the focus has shifted to ensuring your site is genuinely understood by AI agents and search engines. This fundamental mindset change means treating your website as a communication tool designed for both human users and intelligent algorithms. When you prioritize clarity and accessibility for search engines, you’re not compromising user experience—you’re enhancing it.

Structured Data: Your Competitive Advantage

One of the most powerful applications of this philosophy is treating your e-commerce site as a structured data feed rather than just a visual storefront. This approach positions your brand to win on two fronts: higher search rankings and featured answers in search results. By implementing proper structured markup, you’re giving search engines the context they need to understand your products, services, and content at a deeper level. This isn’t just about rankings—it’s about visibility and authority.

The Bigger Picture: Sustainable Growth Through Technical Excellence

Technical SEO drives sustainable organic traffic while simultaneously enhancing user experience. When your site is properly optimized from a technical standpoint, visitors enjoy faster load times, better navigation, and more reliable performance. This creates a virtuous cycle: happier users lead to higher conversions, which validates your SEO efforts and encourages continued investment.

To truly master technical SEO in 2026, don’t overlook the importance of effective reporting and monitoring. Tracking your progress, identifying bottlenecks, and refining your strategy based on data-driven insights ensures your technical SEO efforts remain aligned with your business goals.

This guide was written by Scopic Studios and reviewed by Assia Belmokhtar, SEO project manager at Scopic Studios.

Note: This blog’s images are sourced from Freepik.